First Cross-Universe Character Mixing: MiMiX Puts Mr. Bean in Tom and Jerry

What if Mr. Bean could step into Tom and Jerry's world - and still look like Mr. Bean?

What if Mr. Bean could step into Tom and Jerry's world—and still look like Mr. Bean? Current text-to-video models struggle with this seemingly simple request. When characters from different fictional universes are mixed, the results typically suffer from "style delusion": realistic characters appear cartoonish, or cartoon characters look unnaturally photorealistic. Building on the video generation capabilities of Wan2.1 [1] and extending beyond single-subject personalization approaches like DreamVideo [4], researchers from MBZUAI introduce MiMiX, a framework that enables natural interactions between characters who never coexisted in training data.

The core challenge is twofold. First, characters from different shows—say, Jerry from Tom and Jerry and Mr. Bean from the British sitcom—never appear together in any training dataset. Second, mixing cartoon and live-action styles tends to corrupt the visual identity of one or both characters. Unlike SkyReels-A2 [2], which uses image references with cross-attention fusion, MiMiX learns from extensive video footage with character-annotated captions, enabling richer motion and behavior modeling. This trade-off is important for practitioners: MiMiX gains better motion and behavior learning from video data but loses zero-shot capability—each new character set requires LoRA fine-tuning.

The Two-Part Solution

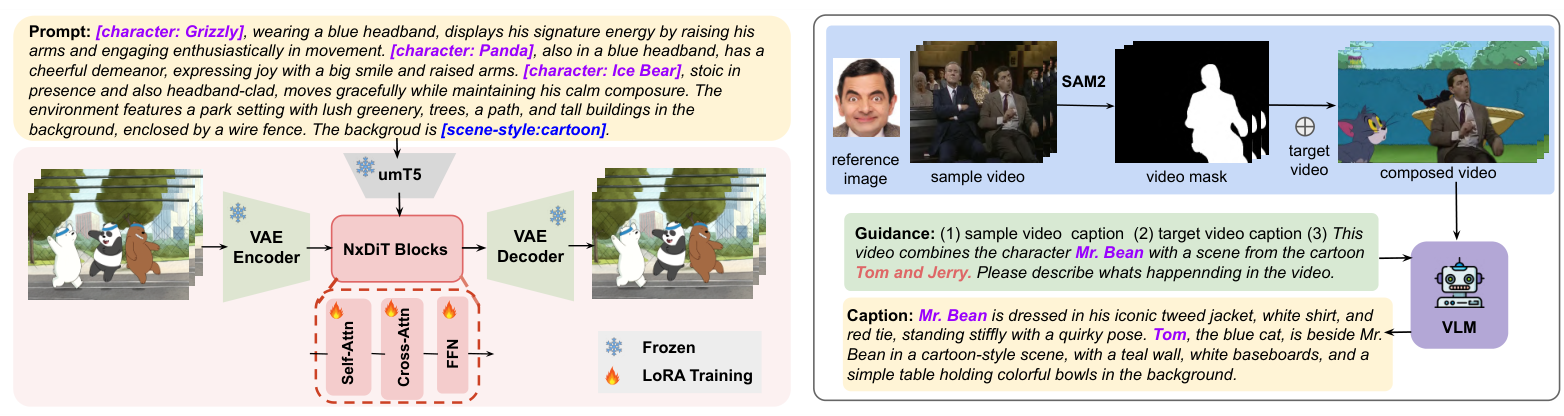

MiMiX addresses the cross-universe challenge through two complementary techniques: Cross-Character Embedding (CCE) and Cross-Character Augmentation (CCA).

Cross-Character Embedding introduces a character-action prompting format that explicitly disentangles character identity from scene context. Instead of describing a scene as "a cat chasing a mouse in a kitchen," the system uses structured prompts like [Character: Tom], chases [Character: Jerry], in a kitchen [scene-style: cartoon]. This explicit tagging allows the model to learn identity and behavioral patterns separately from scene descriptions.

The caption generation process leverages GPT-4o with 10 sampled frames, dialogue transcripts, and source metadata from four TV shows spanning both cartoon and realistic domains: Tom and Jerry and We Bare Bears (cartoon), plus Mr. Bean and Young Sheldon (live-action). The resulting 81-hour dataset captures 10 characters across these visual styles.

Cross-Character Augmentation tackles style delusion through synthetic cross-domain compositing. Using SAM2 [6] for character segmentation, the system extracts characters from their original videos and places them into backgrounds from opposite style domains—cartoon characters into realistic backgrounds and vice versa. A VLM then generates captions for these composed videos, creating training examples where characters from different style domains naturally coexist.

A key finding from ablation studies reveals that only ~10% augmented synthetic data is optimal. Excessive synthetic data degrades realism and overall video quality, while too little leaves the model vulnerable to style delusion when mixing domains.

MiMiX ArchitectureThe framework consists of LoRA fine-tuning on Wan2.1 (left) and a data augmentation pipeline using SAM2 segmentation and VLM captioning (right).

MiMiX ArchitectureThe framework consists of LoRA fine-tuning on Wan2.1 (left) and a data augmentation pipeline using SAM2 segmentation and VLM captioning (right).

Technical Architecture

The framework fine-tunes Wan2.1-T2V-14B using LoRA [7] adaptation. The architecture consists of a VAE Encoder, NxDiT (Diffusion Transformer) Blocks with Self-Attention, Cross-Attention, and FFN modules, and a VAE Decoder. Text prompts are processed through umT5. During fine-tuning, backbone parameters remain frozen while only LoRA layers (rank-32) are updated for 5 epochs.

At inference, the same prompting scheme enables composition of characters who never appeared together in training. Although Mr. Bean and Jerry never meet in the dataset, the model has observed how each interacts with others in their respective domains, allowing it to generalize to cross-universe interactions.

Results: Outperforming SkyReels-A2 Across All Metrics

Evaluation was conducted on the VBench benchmark [8] and custom VLM-based metrics using Gemini-1.5-Flash for character-specific assessment. MiMiX achieves strong improvements across all measured dimensions.

On VBench metrics, MiMiX achieves 0.1893 Consistency (vs. 0.1469 for SkyReels-A2), representing a 28.9% improvement. The Dynamic score reaches a perfect 1.0 on single-subject generation, a 27.5% improvement over SkyReels-A2's 0.7843.

Multi-Subject ComparisonMiMiX (bottom) vs SkyReels-A2 (top) across four scenarios: characters fishing, at a bakery, having tea, and at an aquarium. MiMiX maintains distinct character identities and styles.

Multi-Subject ComparisonMiMiX (bottom) vs SkyReels-A2 (top) across four scenarios: characters fishing, at a bakery, having tea, and at an aquarium. MiMiX maintains distinct character identities and styles.

On custom VLM metrics designed to assess character fidelity, MiMiX shows +18.9% improvement on Motion-P (single-subject) and +20.9% (multi-subject), indicating better preservation of character-specific movement patterns. Style-P improves by +15.6% for multi-subject scenarios, demonstrating robustness against style delusion when mixing cartoon and realistic characters.

The Interaction-P metric, measuring quality of character interactions, shows +6.8% improvement for single-subject and +5.7% for multi-subject settings compared to SkyReels-A2.

Research Context

This work builds on Wan2.1 [1] as the foundational video generation backbone and extends the subject personalization paradigm established by DreamVideo [4] and VideoBooth [5] to multi-character scenarios.

What's genuinely new:

- The character-action prompting format with explicit

[character:name]tags enabling disentangled identity learning - Cross-Character Augmentation using synthetic cross-domain compositing to address style delusion

- The discovery that only ~10% augmented synthetic data is optimal for cross-style robustness

- First framework specifically designed for multi-character mixing across different fictional universes with style preservation

For scenarios requiring arbitrary new characters without retraining, Movie Weaver [3] or Video Alchemist [10] offer tuning-free alternatives at the cost of weaker behavioral modeling.

Open questions:

- Can this approach scale to open-world settings with user-defined or arbitrary characters?

- How does performance degrade with fewer training hours per character?

- Would the framework work for characters from non-Western media (anime, Chinese animation)?

Limitations and Trade-offs

The framework requires per-character LoRA fine-tuning, meaning new characters cannot be added without retraining. This limits scalability in open-world settings where users want arbitrary or user-defined characters. The evaluation is also limited to 10 characters from 4 TV shows—scalability to broader scenarios remains untested.

Occasional failure cases occur in highly complex interaction scenes with multiple characters having overlapping appearances or motion patterns. The reliance on explicit identity annotations means each new domain requires curated character-annotated training data.

Despite these limitations, MiMiX represents a significant step toward generative storytelling where beloved characters from different fictional universes can interact naturally—opening new possibilities for creative content production and fan-generated media.

Check out the Paper, GitHub, and Project Page. All credit goes to the researchers.

References

[1] Team Wan. (2025). Wan: Open and Advanced Large-Scale Video Generative Models. arXiv preprint. arXiv

[2] Fei, Z. et al. (2025). SkyReels-A2: Compose Anything in Video Diffusion Transformers. arXiv preprint. arXiv

[3] Liang, F. et al. (2025). Movie Weaver: Tuning-Free Multi-Concept Video Personalization with Anchored Prompts. arXiv preprint. arXiv

[4] Wei, Y. et al. (2024). DreamVideo: Composing Your Dream Videos with Customized Subject and Motion. CVPR 2024. arXiv

[5] Jiang, Y. et al. (2024). VideoBooth: Diffusion-based Video Generation with Image Prompts. CVPR 2024. Paper

[6] Ravi, N. et al. (2024). SAM 2: Segment Anything in Images and Videos. arXiv preprint. arXiv

[7] Hu, E. J. et al. (2022). LoRA: Low-Rank Adaptation of Large Language Models. ICLR 2022. arXiv

[8] Huang, Z. et al. (2024). VBench: Comprehensive Benchmark Suite for Video Generative Models. CVPR 2024 Highlight. arXiv

[9] OpenAI. (2024). Video Generation Models as World Simulators (Sora). OpenAI Technical Report.

[10] Chen, T.-S. et al. (2025). Video Alchemist: Multi-subject Open-set Personalization in Video Generation. arXiv preprint. Project